AI platform for building autonomous companies

The future of companies is autonomous

Describe the work

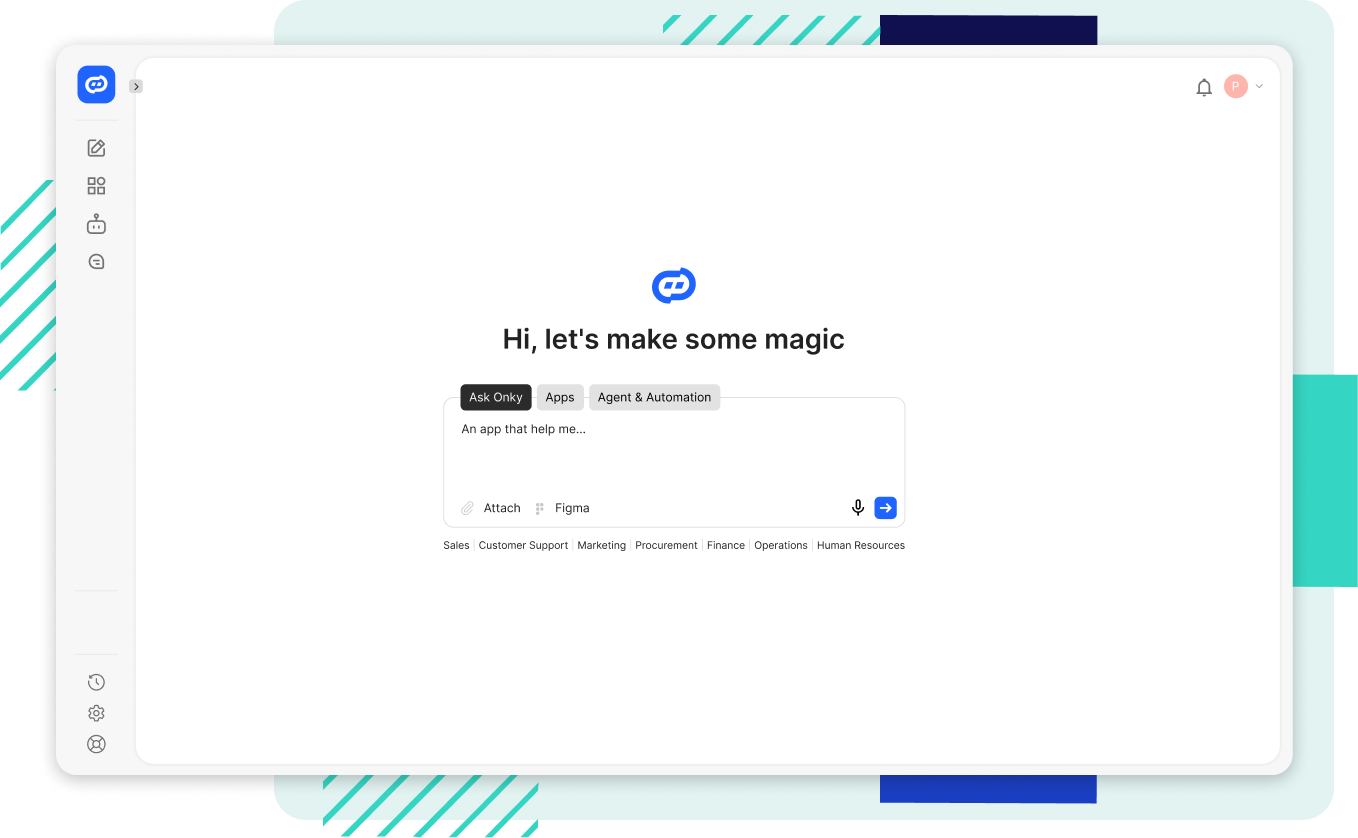

Chat with AI to automate workflows. Onky connects to your company knowledge, decides how to execute tasks, and autonomously builds the tools your team needs.

From raw intent to governed execution.

01. Universal Intent Input

Describe your goal in plain language. The Kernel maps it against system logic and security parameters.

02. Kernel Orchestration

The system autonomously resolves dependencies and verifies compliance before connecting to internal resources.

03. On-the-Fly Assembly

The OS synthesizes just-in-time apps and persistent tools, initializing the necessary drivers to bridge your data with the task.

04. Execute Autonomously

Deploy autonomous agents or full-scale AI apps as native system processes that operate within kernel-enforced boundaries.

Designed for IT control and business delivery speed.

Build with Agents

A Code Agent builds native system drivers. Describe what you need — the OS handles compliance, connectors, and deployment.

- Natural language → production code

- Compliance checked at build time

- Model-agnostic drivers

Deploy & Distribute

Applications run natively inside OnkyOS. Distribute through Your Apps — a governed internal launcher with role-based access.

- Deploy once, govern everywhere

- Role-based access control

- Native system integration

Enforce Policy

A default-deny control plane with function-owned policy gates. Security, Compliance, Legal, and Architecture each own their gate.

- Default-deny by design

- Function-owned gates

- Full audit trail
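To make the default-deny idea concrete, here is a minimal Python sketch. It is not the OnkyOS API: `Action`, `ControlPlane`, `register_gate`, and `authorize` are hypothetical names chosen for illustration. It assumes an action is allowed only when at least one gate exists and every registered gate approves it, and that each decision is appended to an audit log.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Action:
    """Hypothetical description of something a user or agent wants to do."""
    actor: str
    kind: str      # e.g. "deploy" or "model_call"
    target: str


# A gate returns True only when its owning function approves the action.
Gate = Callable[[Action], bool]


@dataclass
class ControlPlane:
    """Illustrative default-deny control plane (assumed design, not the product's)."""
    gates: Dict[str, Gate] = field(default_factory=dict)
    audit_log: List[Tuple[Action, bool, List[str]]] = field(default_factory=list)

    def register_gate(self, owner: str, gate: Gate) -> None:
        # Each function (Security, Legal, ...) owns exactly one gate.
        self.gates[owner] = gate

    def authorize(self, action: Action) -> bool:
        # Collect the owners whose gates reject the action.
        denied_by = [owner for owner, gate in self.gates.items() if not gate(action)]
        # Default-deny: with no gates registered, nothing is allowed.
        allowed = bool(self.gates) and not denied_by
        # Record every decision, allowed or not.
        self.audit_log.append((action, allowed, denied_by))
        return allowed


plane = ControlPlane()
deploy = Action(actor="alice", kind="deploy", target="invoice-app")
print(plane.authorize(deploy))  # False: no gate has approved anything yet

plane.register_gate("Security", lambda a: a.kind in {"deploy", "model_call"})
plane.register_gate("Legal", lambda a: a.target != "restricted-dataset")
print(plane.authorize(deploy))  # True: every registered gate approves
```

Requiring unanimous approval keeps each function's veto independent: adding a new gate can only narrow what is allowed, never widen it.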

Governance isn't a layer. It's the architecture.

Every action through the Kernel

All builds, deployments, and model calls logged end-to-end. No shadow operations.

Connectors governed by default

Integrations register as system drivers via MCP. No rogue API calls.

Agents within boundaries

AI operates within kernel-enforced policy. The system grows with every driver — under your control.

Full audit at the OS level

Who did what, when, with which model. Answer compliance questions instantly.
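As a rough illustration of what "who did what, when, with which model" could look like as data, the Python sketch below filters a list of audit records. The `AuditRecord` fields, the `query` helper, and the sample entries are all assumptions made for this example; the actual OnkyOS audit schema is not described here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical audit entry: who did what, when, with which model."""
    actor: str
    action: str
    model: str
    at: datetime


def query(
    records: List[AuditRecord],
    actor: Optional[str] = None,
    model: Optional[str] = None,
    since: Optional[datetime] = None,
) -> List[AuditRecord]:
    """Return records matching every filter that was supplied."""
    return [
        r
        for r in records
        if (actor is None or r.actor == actor)
        and (model is None or r.model == model)
        and (since is None or r.at >= since)
    ]


# Illustrative data only; names and timestamps are made up.
start = datetime(2024, 1, 1, tzinfo=timezone.utc)
log = [
    AuditRecord("alice", "deploy", "model-a", start),
    AuditRecord("bob", "model_call", "model-b", start + timedelta(days=1)),
    AuditRecord("alice", "model_call", "model-b", start + timedelta(days=2)),
]

# Everything alice did with model-b since the second day of the log.
for record in query(log, actor="alice", model="model-b", since=start + timedelta(days=1)):
    print(record.actor, record.action, record.model, record.at.isoformat())
```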

Inside the Agentic World

Unlike a standard SaaS 'workspace', which is a static collection of tools, the platform is a dynamic orchestration layer. It features an Agentic Kernel that manages the entire AI lifecycle, integrating models, data, and decision-making processes into a unified ecosystem that connects directly to core enterprise resources such as SAP or Salesforce.

Onky.ai operates as a unified Agentic AI Platform for Autonomous Companies. At its heart lies the Agentic Kernel, which enables Conversational App Synthesis: your team can build, connect, and orchestrate autonomous agents and software through natural language. By using a centralized control plane and the Model Context Protocol (MCP), the system ensures that every interaction is secure, governed by automated policy enforcement, and integrated across your entire enterprise infrastructure.

The platform is a programmable environment where users can build custom software, specialized AI apps, and background agents. Once created, these assets are immediately indexed by the Agentic Kernel as native system capabilities, allowing the platform to deploy and orchestrate them alongside core infrastructure.

Users extend the system via the Model Context Protocol (MCP). The platform treats these user-built tools as System Drivers; once a tool is registered via MCP, the Kernel indexes the capability and can immediately orchestrate it as part of a larger automated process.
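The register-then-orchestrate flow can be sketched in a few lines of Python. This is a simplified in-memory model, not an MCP client or server and not the Kernel's real interface: `Driver`, `KernelIndex`, and the two sample tools are hypothetical names used only to show the idea that a tool becomes usable in a larger process once it is registered.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Driver:
    """Hypothetical stand-in for a user-built tool exposed as a system driver."""
    name: str
    description: str
    handler: Callable[..., Any]


class KernelIndex:
    """Illustrative capability index; a real Kernel would do far more."""

    def __init__(self) -> None:
        self._drivers: Dict[str, Driver] = {}

    def register(self, driver: Driver) -> None:
        # Reject duplicates so a capability name always maps to one driver.
        if driver.name in self._drivers:
            raise ValueError(f"driver already registered: {driver.name}")
        self._drivers[driver.name] = driver

    def capabilities(self) -> List[str]:
        return sorted(self._drivers)

    def run(self, steps: List[Tuple[str, Dict[str, Any]]]) -> List[Any]:
        # Execute each step in order; unregistered drivers cannot be called.
        results = []
        for name, kwargs in steps:
            if name not in self._drivers:
                raise KeyError(f"unregistered driver: {name}")
            results.append(self._drivers[name].handler(**kwargs))
        return results


index = KernelIndex()
index.register(Driver("crm.lookup", "Fetch an account by id", lambda account_id: {"id": account_id}))
index.register(Driver("report.render", "Render a text summary", lambda title: f"Report: {title}"))

print(index.capabilities())  # ['crm.lookup', 'report.render']
print(index.run([("crm.lookup", {"account_id": 7}), ("report.render", {"title": "Q3"})]))
```

Refusing to run unregistered names mirrors the "no rogue API calls" principle: only capabilities the index knows about can take part in a process.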

The platform features a programmable infrastructure loop. As users build new tools or the Kernel synthesizes new apps, these assets are registered as native system capabilities and stay available for later tasks. The result is a self-evolving platform that becomes more capable and specialized with every task it executes.

Shadow operations occur when AI calls are made outside of governed channels. In this platform, every model call, build, and deployment is logged at the Kernel level. All connectors are governed by default via MCP, so AI operates strictly within kernel-enforced policies and every action remains auditable.

For organizations requiring maximum data sovereignty, the platform supports on-premise and VPC (Virtual Private Cloud) deployments. This ensures that the Kernel, the app synthesis engine, and all sensitive data processing remain entirely within your controlled infrastructure, isolated from the public cloud.

Access to the platform is $50 per seat, which includes 100 Kernel checkpoints—units of logic modification or prompt updates used to evolve system behavior. Managed hosting for your synthesized apps and agents starts at just $1 per month.